Hello Reader,

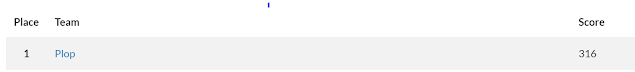

I love weeks when we get to crown new winners. Tun is not new DFIR, you may have seen his tweets before, but he is new to the Sunday Funday winners circle. Tun did some great testing which he documented below specifically for OSX. Give his work a look and join me on congratulating Tun on his win!

The Challenge:

On OSX Mojave, what information can you determine from the KnowledgeC database?

The Winning Answer:

Tun Naung

Sarah Edwards has done quite an extensive research on the knowledge database and wrote a few posts on her blog mac4n6.com. I have never used a Mac before and this is a good opportunity to learn Mac forensics. So, I decided to take the challenge and installed a macOS Mojave in Virtual Box. As a beginner I simply follow the same testing that Sarah did on macOS 10.13 to see the output of macOS 10.14 Mojave.

On macOS 10.14 Mojave, the knowledgeC.db database is found in the same locations as the previous version, macOS 10.13.

/private/var/db/CoreDuet/Knowledge – system context database

~/Library/Application Support/Knowledge – user context database

adams-iMac:~ adam$ sw_vers ProductName: Mac OS X ProductVersion: 10.14

BuildVersion: 18A391

adams-iMac:Knowledge adam$ pwd

/private/var/db/CoreDuet/Knowledge adams-iMac:Knowledge adam$ ls -l total 6120

-rw------- 1 root wheel 774144 Mar 8 04:25 knowledgeC.db

-rw------- 1 root wheel 32768 Mar 8 04:25 knowledgeC.db-shm

-rw------- 1 root wheel 2323712 Mar 8 05:23 knowledgeC.db-wal

adams-iMac:knowledge adam$ pwd

/Users/adam/Library/Application Support/knowledge

adams-iMac:knowledge adam$ ls -l total 1008

-rw------- 1 adam staff 425984 Mar 8 04:28 knowledgeC.db

-rw------- 1 adam staff 32768 Mar 8 04:28 knowledgeC.db-shm

-rw------- 1 adam staff 53592 Mar 8 04:38 knowledgeC.db-wal

From this database, we can get the information about

Application Usage

Application Activities

Safari Browser History

Using the DB Browser for SQLite, we can open the knowledgeC.db database and look at the structure. The database has many tables with many columns.

Note: timestamps in this database use the Mac Epoch time (01/01/2001 00:00:00 UTC).

Figure 1: Database structure of the knowledgeC.db, both system and user context database have 16 tables

Three tables are of particular interest and they store the information which is useful and valuable for an investigator.

ZOBJECT – contains usage entries (Sarah mentioned four weeks, but I could not test as my mac has been running for a week or two only)

ZSOURCE – source of ZOBJECT entries

ZSTRUCTUREDMETADATA – additional metadata associated with ZOBJECT entries

By running the SQLite query on the system context knowledgeC.db database, we get the following

“types”:

SELECT

DISTINCT ZOBJECT.ZSTREAMNAME

FROM ZOBJECT

ORDER BY ZSTREAMNAME

/activity/level

/app/activity

/app/inFocus

/app/usage

/display/isBacklit

/safari/history

For application usage information, we can run the SQLite query below where “/app/inFocus” type will provide what application is being used at a given time.

SELECT

datetime(ZOBJECT.ZCREATIONDATE+978307200,'UNIXEPOCH','LOCALTIME') as "ENTRY CREATION", CASE ZOBJECT.ZSTARTDAYOFWEEK

WHEN "1" THEN "SUNDAY" WHEN "2" THEN "MONDAY" WHEN "3" THEN "TUESDAY" WHEN "4" THEN "WEDNESDAY" WHEN "5" THEN "THURSDAY" WHEN "6" THEN "FRIDAY" WHEN "7" THEN "SATURDAY"

END "DAY OF WEEK", ZOBJECT.ZSECONDSFROMGMT/3600 AS "GMT OFFSET",

datetime(ZOBJECT.ZSTARTDATE+978307200,'UNIXEPOCH','LOCALTIME') AS "START", datetime(ZOBJECT.ZENDDATE+978307200,'UNIXEPOCH','LOCALTIME') AS "END", (ZOBJECT.ZENDDATE-ZOBJECT.ZSTARTDATE) AS "USAGE IN SECONDS", ZOBJECT.ZSTREAMNAME,

ZOBJECT.ZVALUESTRING FROM ZOBJECT

WHERE ZSTREAMNAME IS "/app/inFocus" ORDER BY "START"

-- Result: 697 rows returned in 145ms

Figure 2: Snapshot of the result of SQLite query which show the application usage

Note: the application usage is only for GUI-based applications. User attribution is an issue as this data is from system context database on macOS.

For application activities, we can use “/app/activity” stream type to add more context.

SELECT

datetime(ZOBJECT.ZCREATIONDATE+978307200,'UNIXEPOCH','LOCALTIME') as "ENTRY CREATION", ZOBJECT.ZSECONDSFROMGMT/3600 AS "GMT OFFSET",

CASE ZOBJECT.ZSTARTDAYOFWEEK WHEN "1" THEN "SUNDAY" WHEN "2" THEN "MONDAY" WHEN "3" THEN "TUESDAY" WHEN "4" THEN "WEDNESDAY" WHEN "5" THEN "THURSDAY" WHEN "6" THEN "FRIDAY" WHEN "7" THEN "SATURDAY"

END "DAY OF WEEK", datetime(ZOBJECT.ZSTARTDATE+978307200,'UNIXEPOCH','LOCALTIME') AS "START", datetime(ZOBJECT.ZENDDATE+978307200,'UNIXEPOCH','LOCALTIME') AS "END", (ZOBJECT.ZENDDATE-ZOBJECT.ZSTARTDATE) AS "USAGE IN SECONDS",

ZOBJECT.ZSTREAMNAME,

ZOBJECT.ZVALUESTRING,

ZSTRUCTUREDMETADATA.Z_DKAPPLICATIONACTIVITYMETADATAKEY ACTIVITYTYPE AS "ACTIVITY TYPE",

ZSTRUCTUREDMETADATA.Z_DKAPPLICATIONACTIVITYMETADATAKEY TITLE AS "TITLE",

ZSTRUCTUREDMETADATA.Z_DKAPPLICATIONACTIVITYMETADATAKEY USERACTIVITYREQUIREDSTRI NG AS "ACTIVITY STRING",

datetime(ZSTRUCTUREDMETADATA.Z_DKAPPLICATIONACTIVITYMETADATAKEY EXPIRATIONDATE+9 78307200,'UNIXEPOCH','LOCALTIME') AS "EXPIRATION DATE"

FROM ZOBJECT

LEFT JOIN ZSTRUCTUREDMETADATA on ZOBJECT.ZSTRUCTUREDMETADATA=ZSTRUCTUREDMETADATA.Z_PK

WHERE ZSTREAMNAME IS "/app/activity" or ZSTREAMNAME is "/app/inFocus" ORDER BY "START"

-- Result: 754 rows returned in 207ms

Figure 3: Snapshot of the result of the SQLite query which show more context to the application activity

Even though I did edited the note, activity type data is not populated. Further testing is required for this.

Note: ZSTRUCTUREDMETADATA table has more than 100 columns which is worth looking into to see what data the apps are populating in this table.

For Safari history information, we can run the same query as the previous queries.

SELECT

datetime(ZOBJECT.ZCREATIONDATE+978307200,'UNIXEPOCH','LOCALTIME') as "ENTRY CREATION", CASE ZOBJECT.ZSTARTDAYOFWEEK

WHEN "1" THEN "SUNDAY"

WHEN "2" THEN "MONDAY" WHEN "3" THEN "TUESDAY" WHEN "4" THEN "WEDNESDAY" WHEN "5" THEN "THURSDAY" WHEN "6" THEN "FRIDAY" WHEN "7" THEN "SATURDAY"

END "DAY OF WEEK", ZOBJECT.ZSECONDSFROMGMT/3600 AS "GMT OFFSET",

datetime(ZOBJECT.ZSTARTDATE+978307200,'UNIXEPOCH','LOCALTIME') AS "START", datetime(ZOBJECT.ZENDDATE+978307200,'UNIXEPOCH','LOCALTIME') AS "END", (ZOBJECT.ZENDDATE-ZOBJECT.ZSTARTDATE) AS "USAGE IN SECONDS", ZOBJECT.ZSTREAMNAME,

ZOBJECT.ZVALUESTRING FROM ZOBJECT

WHERE ZSTREAMNAME IS "/safari/history" ORDER BY "START"

-- Result: 57 rows returned in 28ms

Figure 4: Snapshot of the results of the SQLite query which shows the Safari history

Note: if the user clears the browsing history, these entries get removed too. Private Mode browsing does not show up either.

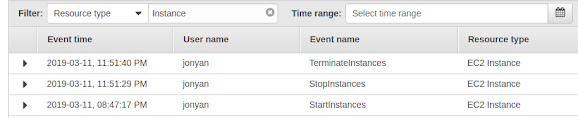

For user correlation, we can run the SQLite query on the user context knowledgeC.db database.

SELECT

DISTINCT ZOBJECT.ZSTREAMNAME FROM ZOBJECT

ORDER BY ZSTREAMNAME

/event/tombstone

/portrait/entity

/portrait/topic

There are a lot more testing to be done as this database contains a lot of information.

- Knowledge is power -

Credits:

https://www.mac4n6.com/blog/2018/8/5/knowledge-is-power-using-the-knowledgecdb-database- on-macos-and-ios-to-determine-precise-user-and-application-usage

https://sqlitebrowser.org/

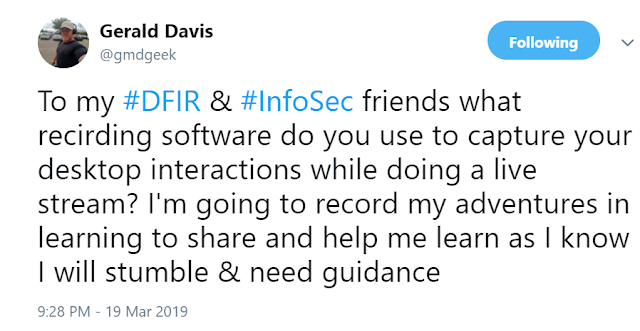

https://www.hecfblog.com/2019/03/daily-blog-636-sunday-funday-3319.html

Also Read: Daily Blog #641

.png)