Hello Reader,

Another challenge has found a victor! Congratulations to Steve M, who provided this week's best answer and did a good job of going into depth. Next week, get ready for a full forensic image challenge. In the meantime, read what Steve M has to say today, and again next year when it appears in print in Hacking Exposed: Computer Forensics, 3rd Edition!

The Challenge:

Your suspect has a Windows XP system, and you have evidence from the UserAssist records that he ran CCleaner a month ago, but the count shows it has been run multiple times before. Write out what your methodology would be to determine:

- If system cleaning took place

- If wiping took place

- What is now missing

The Winning Answer:

From Steve M

The UserAssist key on the suspected system indicates CCleaner was run one month ago, but the count indicates it has been run more than once. Here is how I would answer the questions outlined in the contest:

1) If system cleaning took place

System cleaning, by default, removes files from several locations, including browser-specific Temporary Internet Files, cookies, and histories. The date of last execution recorded in the UserAssist registry key can be treated as a checkpoint: we can check whether recorded system activity appears only after that date. For example, if the suspect system only has cookies that were created after the date CCleaner was last run, that would be a strong indicator that system cleaning took place.

To determine the options enabled when CCleaner was last run, I would look at "HKCU\Software\Piriform\CCleaner" for the user who last used the software. Each option has a registry value that enables or disables the corresponding checkbox in the GUI, so these values are a good indicator of which options were run the last time the application was used. It is entirely possible that the suspect modified the settings for the last run, or enabled or disabled settings without actually performing a clean, so these values should not be considered hard evidence.

To determine if the user had run system cleaning based on disk contents, I would look for the presence (and contents) of the following files:

Internet Explorer:

C:\Documents and Settings\\Local Settings\Temporary Internet Files\Content.IE5\index.dat & subdirectories

C:\Documents and Settings\\Cookies

C:\Documents and Settings\\Local Settings\History\History.IE5

Chrome:

C:\Documents and Settings\\Local Settings\Application Data\Google\Chrome\User Data\

Firefox:

C:\Documents and Settings\\Local Settings\Application Data\Mozilla\Firefox\Profiles\ \cookies.sqlite

C:\Documents and Settings\\Local Settings\Application Data\Mozilla\Firefox\Profiles\ \downloads.sqlite

C:\Documents and Settings\\Local Settings\Application Data\Mozilla\Firefox\Profiles\ \places.sqlite

C:\Documents and Settings\\Local Settings\Application Data\Mozilla\Firefox\Profiles\ \search.sqlite

These artifacts can be investigated using commercial products (Internet Evidence Finder, ChromeAnalysis Plus, EnCase) or free tools (Redline, Galleta, Pasco). If the only files present were created after the CCleaner execution date, that would indicate system cleaning took place. If the files or directories are missing altogether, further investigation is warranted to determine whether the system is commonly used for web browsing at all.
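The checkpoint comparison described above can be sketched in a few lines of Python. This is only a minimal illustration, assuming the evidence is mounted read-only somewhere on the examiner's workstation and that file system timestamps are trustworthy. The function name and the `last_run` parameter (standing in for the UserAssist last-execution time) are made up for this example, and modification time is used purely because it is available on every platform.

```python
import os
from datetime import datetime, timezone


def split_by_checkpoint(root, last_run):
    """Walk root and sort files into those modified before or after last_run.

    last_run is a timezone-aware datetime (hypothetically, the UserAssist
    last-execution time). Only modification times are checked here; an
    examiner would also want creation times from a forensic tool.
    """
    before, after = [], []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            mtime = datetime.fromtimestamp(os.path.getmtime(path), tz=timezone.utc)
            # Anything at or after the checkpoint counts as "after".
            (before if mtime < last_run else after).append(path)
    return sorted(before), sorted(after)
```

If `before` comes back empty for a browser profile that should hold months of history, that lines up with the cleaning hypothesis. It is not proof on its own, since a new profile would look the same.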

By default, CCleaner will also clear certain Windows logs. Specifically, the logs in C:\Windows\system32\wbem\logs can be inspected to find their earliest entries. If the earliest entries all appear after the last execution time of CCleaner, it is likely these logs were wiped by the system cleaning process as well.

2) If wiping took place

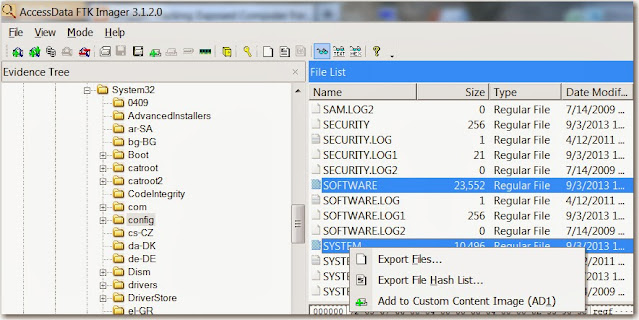

CCleaner also offers a free space wiping utility, which identifies all unallocated clusters and fills them with zeros (nulls). To determine whether this has been performed, a low-level disk analysis tool can be used (EnCase, dd, etc.). Specifically, a hex dump of the contents of any wiped unallocated cluster will show null bytes instead of leftover data from deleted files. While some of the wiped clusters may now hold data from activity after CCleaner was run, it is unlikely that the majority of them will, given today's large-capacity disks. Therefore, viewing several unallocated clusters at random should give a good indication of whether they have been zeroed out.
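The random spot check can be automated. The sketch below assumes you already have a raw image and a list of byte offsets for unallocated clusters, obtained from a separate file system analysis tool; it does not work out allocation status itself. The function name, the 4096-byte cluster size, and the sample size are all illustrative assumptions.

```python
import random


def zeroed_fraction(image_path, cluster_offsets, cluster_size=4096,
                    sample_size=100, seed=0):
    """Return the fraction of sampled clusters that contain only null bytes.

    cluster_offsets: byte offsets of unallocated clusters, assumed to come
    from a separate file system analysis tool.
    """
    offsets = list(cluster_offsets)
    if not offsets:
        raise ValueError("no unallocated clusters supplied")
    rng = random.Random(seed)  # fixed seed keeps the sample reproducible
    sample = rng.sample(offsets, min(sample_size, len(offsets)))
    zeroed = 0
    with open(image_path, "rb") as image:
        for offset in sample:
            image.seek(offset)
            data = image.read(cluster_size)
            # A wiped cluster has no non-zero byte anywhere in it.
            if data and not any(data):
                zeroed += 1
    return zeroed / len(sample)
```

A result close to 1.0 would be consistent with a free space wipe, while a low value suggests ordinary deleted data is still sitting in unallocated space. Keep in mind that a brand-new or freshly formatted disk can also be mostly zeros.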

Additionally, CCleaner appears to store the settings used upon last execution in "HKCU\Software\Piriform\CCleaner", so the investigator could extract the suspect user's registry hive and search for "(App)Wipe Free Space" to see whether the checkbox is checked (it is "False" by default, meaning "wipe free space" is disabled). The investigator could also launch CCleaner.exe on a mirror copy of the drive, as the user who last executed it, and see whether "Wipe Free Space" is checked. If this setting agrees with what the disk shows, it adds confidence, but on its own it is not sufficient to say that wiping was performed, since the preference persists even if the action is never run.
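One way to check that setting without launching anything is to export the key to a text .reg file from a working copy of the hive and parse it. The sketch below assumes the export has already been read and decoded into a Python string, and that the options are stored as string values as described above. The function name is hypothetical, and only plain string values are handled.

```python
import re

# Matches string values in a .reg export, e.g. "name"="value".
# Escape sequences inside the quotes are left as-is.
_STRING_VALUE = re.compile(
    r'^"(?P<name>(?:[^"\\]|\\.)*)"="(?P<data>(?:[^"\\]|\\.)*)"\s*$'
)


def read_string_values(reg_text, key_suffix):
    """Collect string values under any key whose path ends with key_suffix."""
    values = {}
    in_key = False
    for line in reg_text.splitlines():
        line = line.strip()
        if line.startswith("[") and line.endswith("]"):
            # A new key header: decide whether its values are of interest.
            in_key = line[1:-1].lower().endswith(key_suffix.lower())
            continue
        if in_key:
            match = _STRING_VALUE.match(line)
            if match:
                values[match.group("name")] = match.group("data")
    return values
```

Calling `read_string_values(text, r"Software\Piriform\CCleaner").get("(App)Wipe Free Space")` would then return the stored setting, or `None` if the value is absent from the export.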

3) What is now missing

Assuming CCleaner was run to perform system cleaning and wipe free space on the sole physical disk drive, important artifacts regarding browsing history, system restore points, and deleted but not yet overwritten files may now be unrecoverable. It may therefore be much more difficult for an investigator to find the information they are looking for, which is the product's intention. However, some of these artifacts may be recovered by examining the system's pagefile (data held in memory during the execution of CCleaner, then flushed to disk), slack space on the disk (not overwritten by "wipe free space"), off-device backups on a network storage device or external media, and proxy solutions, which are typically deployed in an enterprise. The analysis will be harder, but not necessarily impossible.
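To get a feel for how much room slack space leaves for leftover data, here is a small back-of-the-envelope calculation. It assumes a 4096-byte cluster, which is a common default but should be confirmed against the actual volume, and it ignores very small NTFS files that live entirely inside the MFT record. The function name is made up for this example.

```python
def file_slack_bytes(file_size, cluster_size=4096):
    """Bytes between the end of a file and the end of its last cluster."""
    if file_size < 0 or cluster_size <= 0:
        raise ValueError("file_size must be >= 0 and cluster_size must be > 0")
    remainder = file_size % cluster_size
    # A file that ends exactly on a cluster boundary has no slack.
    return 0 if remainder == 0 else cluster_size - remainder
```

For instance, a 10,000-byte file on 4096-byte clusters occupies three clusters and leaves 2,288 bytes of slack in the last one.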
