By Matthew Seyer

As many of you know, David Cowen and I are huge fans of file system journals! This love also includes all change journals designed by operating systems such as FSEvents and the $UsnJrnl:$J. We have spent much of our Dev time writing tools to parse the journals. Needless to say, we have lots of experience with file system and change journals. Here is some USN Journal logic for you.



USN Journal Logic

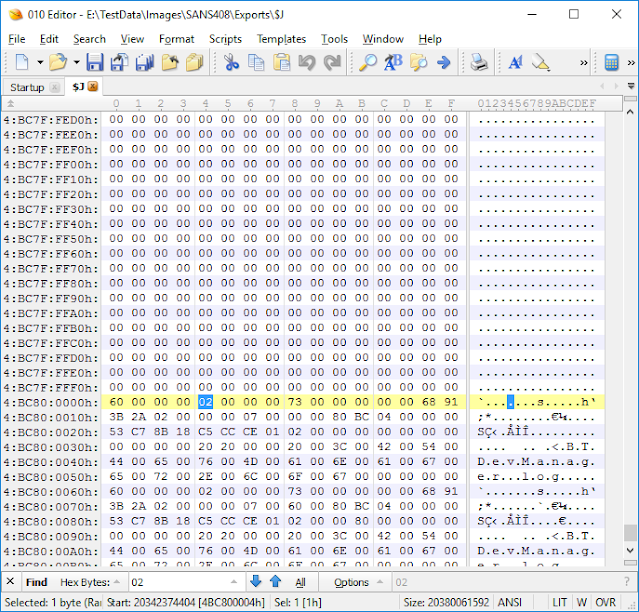

First off, it is important to know that the USN Journal file is a sparse file. MSDN explains what a sparse file is: https://msdn.microsoft.com/en-us/library/windows/desktop/aa365564(v=vs.85).aspx. When the USN Journal (referred to as $J from here on out) is created, it is given a max size (the maximum amount of space NTFS allocates for the journal) and an allocation delta (the size of the chunk that is added to the end and removed from the beginning of the journal as it grows). This is seen here: https://technet.microsoft.com/en-us/library/cc788042(v=ws.11).aspx.

The issue is that many forensic tools cannot export a file out as a sparse file. Even if they could, only a few file systems support sparse files, and I don’t even know if a sparse file on NTFS is the same as a sparse file on OS X. This leads to a common problem. The forensic tool sees the $J as larger than it really is:

While this file is 20,380,061,592 bytes in size, the allocated portion of records is much smaller. Most forensic tools will export out the entire file with the unallocated data as 0x00, which makes sense when you look at the MSDN Sparse File section (link above). When we extract this file with FTK Imager, we can use the Windows command `fsutil sparse` to verify that the exported file is not a sparse file (https://technet.microsoft.com/en-us/library/cc788025(v=ws.11).aspx):
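If you would rather script this check, here is a minimal Python sketch of the same idea. It assumes Python 3 on Windows, where `os.stat` exposes `st_file_attributes`; the function names are my own for illustration, and this is not what `fsutil` does internally.

```python
import os

# Windows file attribute bit for sparse files (FILE_ATTRIBUTE_SPARSE_FILE).
FILE_ATTRIBUTE_SPARSE_FILE = 0x200


def has_sparse_flag(attributes):
    """Return True if the sparse bit is set in a Windows attribute mask."""
    return bool(attributes & FILE_ATTRIBUTE_SPARSE_FILE)


def is_sparse_file(path):
    """Return True or False on Windows, or None where attributes are unavailable."""
    attributes = getattr(os.stat(path), "st_file_attributes", None)
    if attributes is None:
        return None  # not on Windows, so there is nothing to check
    return has_sparse_flag(attributes)
```

An exported $J that comes back `False` here is a plain file padded with zeros, which is the situation described above.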

Trimming the $J

Once it’s exported, what’s a good way to find the start of the records? I like to use 010 Editor. I scroll towards the end of the file to where there are still empty blocks (all 0x00s), then I search for 0x02, as I know I am looking for USN Record version 2:

Now, if I want to export just the record area, I can start at the beginning of the found record, select to the end of the file, and save the selection as a new file:

The resulting file is 37,687,192 bytes in size and contains just the record portion of the file.

This is significantly smaller in size! Now, how do we go about this programmatically?

Automation

While other sparse files can have interspersed data, the $J sparse file keeps all of its data at the end of the file. This is because the Update Sequence Number (USN) in each record corresponds to the record's offset in the file! If you want to look at the structure of the USN record, here it is: https://msdn.microsoft.com/en-us/library/windows/desktop/aa365722(v=vs.85).aspx. I will note that I would go about this two different ways: one method for a file that has already been extracted by another tool, and a different method for extracting it directly with the TSK lib. For now, we will just look at the first scenario.
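To make the record layout concrete, here is a minimal Python sketch that unpacks a single USN_RECORD_V2 using the field order from the MSDN page linked above. The names `USN_V2_HEADER`, `UsnRecordV2`, and `parse_usn_record_v2` are my own for illustration, and this is not code from any of our tools.

```python
import struct
from collections import namedtuple
from datetime import datetime, timedelta, timezone

# Fixed 60-byte header of USN_RECORD_V2, little-endian, in MSDN field order.
USN_V2_HEADER = struct.Struct("<IHHQQqqIIIIHH")

UsnRecordV2 = namedtuple(
    "UsnRecordV2",
    "record_length major_version minor_version file_reference "
    "parent_file_reference usn timestamp reason source_info "
    "security_id file_attributes file_name",
)


def parse_usn_record_v2(buf, offset=0):
    """Parse one version 2 USN record located at `offset` in `buf`."""
    (length, major, minor, file_ref, parent_ref, usn, filetime, reason,
     source_info, security_id, attributes, name_length,
     name_offset) = USN_V2_HEADER.unpack_from(buf, offset)
    if major != 2:
        raise ValueError("not a USN_RECORD_V2 (MajorVersion=%d)" % major)
    # FileNameOffset is relative to the start of the record; the name is UTF-16LE.
    name_start = offset + name_offset
    name = buf[name_start:name_start + name_length].decode("utf-16-le")
    # TimeStamp is a FILETIME: 100-nanosecond intervals since 1601-01-01 UTC.
    timestamp = datetime(1601, 1, 1, tzinfo=timezone.utc) + timedelta(
        microseconds=filetime // 10)
    return UsnRecordV2(length, major, minor, file_ref, parent_ref, usn,
                       timestamp, reason, source_info, security_id,
                       attributes, name)
```

In a full-size $J, the `usn` value of a record should line up with the offset the record was read from, which is a handy sanity check.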

Because the records are located in the last blocks of the file, I start from the end of the file and work backwards to find the first record, then write out just the records portion of the file. This saves a lot of time because you are not searching through potentially many gigs of zeros. You can find the code at https://github.com/devgc/UsnTrimmer. I have commented the code so that it is easy to understand what is happening.
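Here is a minimal Python sketch of that backwards search. It is not the UsnTrimmer code itself. The 64 KiB chunk size and the function names are my own assumptions, and it assumes the records are version 2, 8-byte aligned, and run up to the end of the file.

```python
import os
import shutil
import struct

CHUNK_SIZE = 64 * 1024  # assumed scan granularity, not taken from UsnTrimmer


def find_records_start(path, chunk_size=CHUNK_SIZE):
    """Return the offset of the first record, or None if the file is all zeros."""
    size = os.path.getsize(path)
    with open(path, "rb") as fh:
        # Walk backwards from the end until we hit a chunk that is all 0x00.
        pos = size
        boundary = 0  # stays 0 if the file has no all-zero chunk
        while pos > 0:
            start = max(0, pos - chunk_size)
            fh.seek(start)
            if not any(fh.read(pos - start)):
                boundary = pos  # records begin at or after this offset
                break
            pos = start

        # Scan forward from the boundary for the first non-zero byte.
        fh.seek(boundary)
        offset = boundary
        while True:
            chunk = fh.read(chunk_size)
            if not chunk:
                return None
            data = chunk.lstrip(b"\x00")
            if data:
                first_nonzero = offset + len(chunk) - len(data)
                # Assumption: records are 8-byte aligned. Rounding down covers
                # a RecordLength whose low byte is 0x00 (for example 0x100).
                return first_nonzero & ~7
            offset += len(chunk)


def trim_usn_journal(src, dst, chunk_size=CHUNK_SIZE):
    """Copy only the record area of `src` to `dst`; return the start offset."""
    start = find_records_start(src, chunk_size)
    if start is None:
        raise ValueError("no record data found in %s" % src)
    with open(src, "rb") as fin, open(dst, "wb") as fout:
        fin.seek(start)
        header = fin.read(8)
        if len(header) < 8:
            raise ValueError("file too short to hold a USN record")
        # RecordLength, MajorVersion, MinorVersion open every USN record.
        length, major, _minor = struct.unpack("<IHH", header)
        if major != 2 or length < 60 or length % 8:
            raise ValueError("data at offset %d is not a USN_RECORD_V2" % start)
        fin.seek(start)
        shutil.copyfileobj(fin, fout, chunk_size)
    return start
```

Only the tail of the file is read before the copy starts, so the leading zeros are never scanned.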

Now let's use the tool:

We see that the usn.trim file is the same size as the one we made manually, but let's check the hash to make sure we have the same results as the manual extract:
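A minimal Python sketch of that comparison follows. SHA-256 is my assumption here, since any hash your tools agree on will do, and the function names are illustrative.

```python
import hashlib


def file_digest(path, algorithm="sha256", chunk_size=1024 * 1024):
    """Hash a file in chunks so large extracts do not need to fit in memory."""
    digest = hashlib.new(algorithm)
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def same_content(path_a, path_b, algorithm="sha256"):
    """Return True if both files produce the same digest."""
    return file_digest(path_a, algorithm) == file_digest(path_b, algorithm)
```

For example, `same_content("usn.trim", "usn.manual")` would compare the tool output against the manual extract, where `usn.manual` is a hypothetical name for the file saved from 010 Editor.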

So far I have verified this on a $J extracted from the SANS408 system image and on some local $J files. But of course, make sure you use multiple techniques to verify. This is quick proof-of-concept code.

Questions? Ask them in the comments below or send me a tweet at @forensic_matt
